Spring 2026 Recap: NULab Hosts the DISKAH Fellows

On April 15, 2026, the NULab for Digital Humanities and Computational Social Science hosted the current Fellows of the UK Research and Innovation-funded Digital Skills in the Arts and Humanities (DISKAH) network for a hybrid presentation. The DISKAH fellows presented their digital humanities projects to faculty, students, and staff at NU London, Boston, and Oakland.

The first speaker, Dr. Brian Ball, is a Professor of Philosophy at Northeastern University London. Dr. Ball walked through the process of developing and applying python code for the PolyGraphs project. This research aims to understand the effects of mis- and dis-information on the beliefs of networked communities and people within those networks. For someone unfamiliar with network analyses for philosophical research, I was interested to learn how the intricacies of computational analysis affected the research process to understand individuals’ rational behavior and decision-making when encountering false information.

Dr. Ball coordinated with a colleague in computer science who had developed python code for simulating social distancing, which scales up well to large populations and networks. The PolyGraphs team first imported libraries related to research analyses, specifically the Polygraphs dot analysis processor library, which allows them to grab the needed data and load it to the program. The program produces simulations of the networks and agents within networks, and models their behavior over different operational conditions, such as the quality of information the agents in the network have. Dr. Ball reported the DISKAH fellowship helped him get better at analyzing the synthetic data generated from these simulations.

For each simulation the program produces a network graph with nodes and lines of connection among nodes. Sometimes a simulation runs over thousands of steps, whereas others run over fewer steps to reach a completed simulation. Overall, he used three categories of network simulations: Barabási Albert, Erdos Renyi random, and Stroganz networks, with each having a different network configuration. As agents flip coins in different networks, the nodes of the networks can maintain or change their beliefs depending on the various operational conditions. The iterated simulations produce a graph of how beliefs in truth change over time.

Dr. Ball shared his notebook for attendees to run the code themselves. The project’s analyses compared simulations where agents were more gullible versus those where agents were skeptical of information. When there are a lot of unreliable agents in the network, this skeptical attitude slows down the spread of mis- and dis-information.

One attendee asked how the project chose probabilities of agents being reliable. Dr. Ball explained he identified these probabilities by choosing a value and exploring the impact through iterated simulations. The project assistant started with a probability of 0.75 and explored 0.5 intervals up to see the effect on networks. In addition, the project looked to modern empirical data to align the simulated probabilities with reality; for example, using the percentage of reliable news sources in Ukraine to get a rough estimate of probable, reliable agents.

The next speaker, Dr. Gabriel Egan is a Professor of Shakespeare Studies at DeMontfort University. His research focused on computational stylistics of Shakespeare’s writings. This work stems from the idea that computational analyses can help researchers identify distinctive writing styles, and how impersonators can use these styles and mannerisms to mimic writers. The hundred most-used words in early modern drama can reveal the distinctive writing styles of individuals (Figure 1). We each have preferences for using some of these words over others. From these habits, and with enough textual data on authors’ complete works, scholars can identify writing styles with 80–90% reliability.

In practice, Dr. Egan’s team reviewed works attributed to Shakespeare as well as related, contemporaneous works to understand Shakespeare’s and his counterparts’ writing styles. Dr. Egan’s team mapped and weighted the occurrences of the common words within five words of each other to create Word Adjacency Networks. The diagram captures the unique habits of authors to use certain words within clusters (Figure 2). Dr. Egan explained that beyond five words, the distinctive styles of authors are less noticeable and lost in the broader context. The intent is to create these Word Adjacency networks for authors’ complete works and compare them to credibly attribute authorship. In addition, the project developed tables tracking the probabilities of the common words usage within five words of each other.

Furthermore, he tracked the likeness of authors’ word adjacency networks. Through this process, he can see which works are most like the author’s other works, and which seem to be a mix of authors’ styles. Notably, those works that scholars already know or suspect as being co-authored with Shakespeare, appeared less like Shakespeare’s other works and confirmed knowledge that these works represent more than one distinct writing style. Egan’s team is applying the analysis further to identify which portions of plays and other writings are authored by other writers, and which sections of the works are authored by Shakespeare. Dr. Egan noted he needed a large corpus of writings to be able to identify distinctive sections of plays, and explained that he experimented with dividing up the writings in different ways to help narrow attribution.

Attendees were curious whether authors intentionally chose a particular clustering of common words, and if there was semantic value in these writing styles. Dr. Egan had the view that the semantic value is only there when impersonators are mimicking the style of others. Moreover, Dr. Egan asserted that authors’ writing styles and choices to use common words is purely subconscious, with slight impacts from common phrases and literary devices, such as the practice of beginning poems with “and.” Another attendee asked about AI writing styles, and whether this style of analysis could be applied to identifying unique styles of AI-generated outputs. Dr. Egan suspects that AI models have average writing styles and that it would likely be harder to catch distinctive indicators of AI-generated content now that models are more sophisticated.

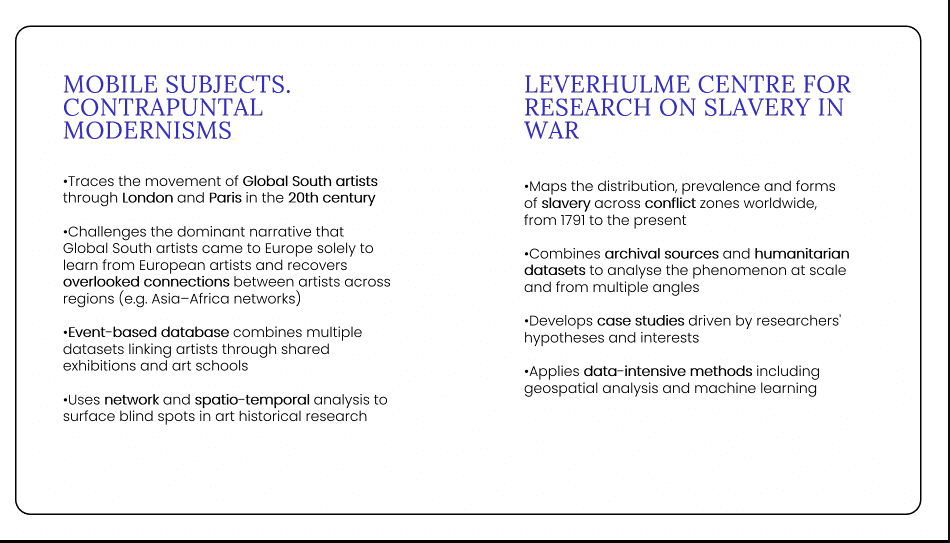

The next speaker, Dr. Maribel Hidalgo Urbaneja, was a Postdoctoral Researcher at the University of the Arts, London and is now a research associate at Kings College London’s Digital Humanities Center and Leverhulme Centre for Research on Slavery in War. Her presentation focused on the learnings gained from the DISKAH Fellowship as she shifted positions to now researching modern conflicts and enslavement with data-centered methods.

Dr. Urbaneja worked on two projects through the DISKAH fellowship using digital research infrastructure and high performance computing. One focused on the movement and network of Global South artists in London and Paris in the 20th century. This project used a dataset of 12,000 artists over 500 events. Her findings challenge a paternalistic narrative that global-majority artists came to Europe to learn from western artists only. It brings to light the connections among African and Asian artists, and explores how artistic inspiration evolved during this period for artists from the Global South.

The second project initiates part of the Leverhulme Centre’s new research. The Centre is welcoming scholars from different backgrounds, and Dr. Urbaneja applied the reflexive learning approach gained from the DISKAH fellowship’s summer school to developing the Centre’s research focus. The Centre’s goal is to map the prevalence of enslavement and conflicts from 1791 to present using archival sources and humanitarian data sets. I found it interesting that the Centre is mapping and analyzing the phenomenon of enslavement across multiple angles to understand its scale and spread over time.

The Fellowship gave Dr. Urbaneja insight into how digital research infrastructure can be applied and built to develop the Centre’s case studies. For example, using high performance computing to map sites of slavery, or topic model numerous legal and court proceedings. In addition, she spoke how her own learning through the DISKAH fellowship can improve knowledge sharing and engagement, by identifying and questioning researchers’ discipline-specific assumptions and practices. Dr. Urbaneja is applying these reflexive research principles to survey the Centre’s researchers and create a collaborative environment for data-centered research over the next ten years. Attendees asked how junior scholars in the humanities can get involved in DH without becoming a computer scientist. Dr. Urbaneja recommended using DH conferences and summer schools to learn specific skills to apply where traditional humanities methods are insufficient.

The final speaker, Dr. Jasper van der Klis is a Postdoctoral Researcher at Guildhall School of Music. This research focuses on Italian composer Domenico Scarlatti. The composer wrote numerous works while teaching wealthy patrons, and his works were immensely popular during his heyday. The viral nature of Scarlatti’s works made them ripe for copying and impersonation. However, scholars today do not know why or how Scarlatti’s works spiked in popularity, and most of the knowledge around the composer comes from secondary writings and primary keyboard compositions. The prevalence of individuals copying the composer’s works and/or suspected to be Scarlatti requires analysis to identify the original compositions. Dr. van der Klis is applying phylogenetic analytical methods to map Scarletti’s compositions. To test this method, he worked with a colleague to apply phylogenetic methods to 15th century Spanish poetry compositions. This produces a representation of the connections among word uses.

For music, Dr. van der Klis mapped connections among notes in works known to be composed by Scarlatti, as well as copies of the composer’s works which contain musical variations of the original compositions. This work requires manual data analysis to identify variants in copies of Scarlatti’s work (Figure 4). Dr. van der Klis explained that the method cannot be automated because of the time required to transcribe musical compositions to textual data and find variants rather than easily, visually spotting variations with human review of compositions. However, once variants are manually identified, they can be catalogued into a variant dictionary to categorize types of musical variants. The variants include added and removed rests, added or changed notes, and other changes to the note sheet. By inputting the compositions into a script that tracks the identified variants, Dr. van der Klis was able to speed up the time required to analyse compositions, as well as test the compositions under various configurations. Through this process, his team discovered each composition requires different configurations of variants. The primary goal of the research was less about the music itself, and more about “who copied the work, why, when, and what they had in front of them at that time.”

Dr. van der Klis also explained some of the archival archaeology that went into identifying copiers of Scarlatti’s work. He visited musical archives to view the compositions in person, and noted differences in the works’ organization with archives, the types of paper the compositions were written on, and other details that if not for manual study, would be missed. These details helped pinpoint a copier of Scarlatti’s works, who at one point was considered to be Scarlatti himself because of how well he copied the composer’s music. It was fascinating to hear how Dr. van der Klis solved the case: the paper the presumed copy was written on was produced several years after Scarlatti’s death, and could not be an original.

This rigorous approach extended to working with large language models to identify variants across compositions. He identified instances where the model seemingly diverted from the researcher’s prompts and provided unnecessary information. As a result, Dr. van der Klis liked to understand each line of script, and find places in open-source code where rules around language embedded in the scripts affected the output. I enjoyed hearing about this thorough and intentional research method, especially given the current debates around unquestioning AI use.

Overall, I learned a lot through the DISKAH Fellows’ presentations on applying digital skills to data-intensive research, and enjoyed the speakers’ reflections on their Fellowship experiences.